Last updated: 7 March 2026

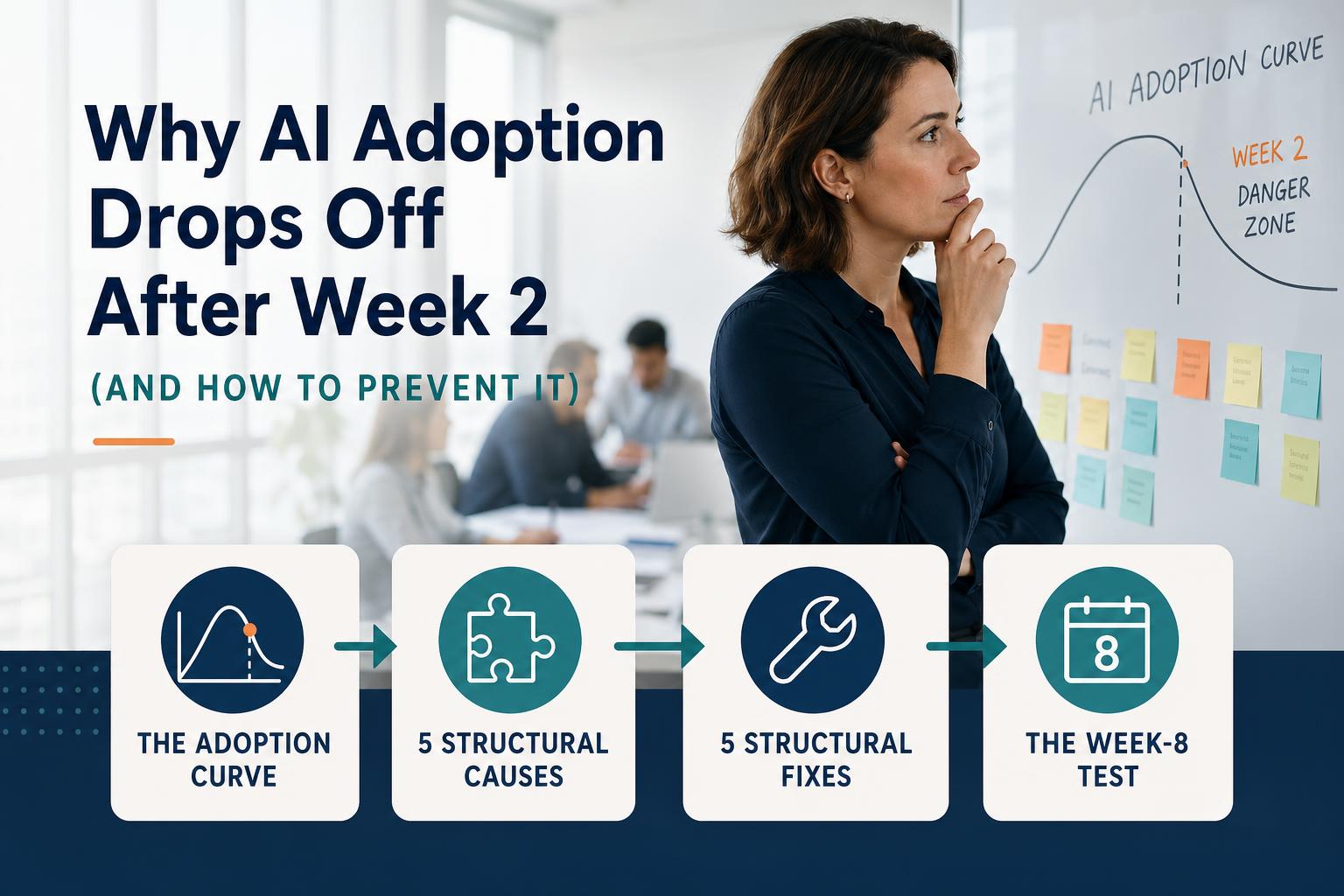

Why AI adoption drops off after week 2 — and the five fixes that prevent it

Most AI enablement programs follow the same trajectory: strong initial interest, a productive week one, declining usage through week two, and a return to old habits by week three. This pattern is not random — it has five specific structural causes, each with a specific fix. This guide explains the drop-off mechanism and what to build into your program design to prevent it.

The adoption curve: why week 2 is the danger zone

The adoption drop-off pattern in AI enablement programs is well-documented and follows a consistent shape. Week one sees high engagement — the novelty effect, the kickoff energy, the early wins from managers who experiment and find the tools useful. By week two, the novelty has worn off and the real test begins: will managers integrate the AI workflow into their actual workday, or will the existing routine reassert itself?

The existing routine almost always wins, for one simple reason: it requires no effort. Using an AI workflow requires remembering to use it, knowing how to use it well, and having the prompt or template accessible at the moment the task arises. When any one of these three conditions isn't met, the manager defaults to what they've always done.

By week three, managers who didn't form the habit in week two are effectively back to baseline. By week four, the program looks like it "didn't stick", even if the AI tools are demonstrably better for the tasks they were designed for.

The drop-off is not a motivation problem. It's a design problem.

Five structural causes of adoption drop-off

1. The workflow isn't embedded in the manager's natural work rhythm

If using AI requires opening a separate application, finding a saved prompt document, or switching away from the task where the need arises, friction accumulates. The manager who needs to write a status update on Friday afternoon will not, under time pressure, navigate to a separate AI tool. They'll write the update the way they always have.

Adoption that survives past week two requires the AI workflow to be accessible at the exact moment the relevant task arises — in the email client, the document, the Teams channel, or wherever that task actually lives.

2. No accountability structure after week one

In week one, the kickoff creates social accountability — managers are expected to try the tools as part of the program launch. In week two, that expectation disappears unless it's explicitly maintained. Without a visible adoption metric, a weekly check-in, or a coaching touchpoint, the behavioral expectation evaporates and managers revert to baseline.

Most AI enablement programs do not build accountability into weeks two and three. They run a launch event and then wait to see what happens. What happens is the drop-off.

3. Prompts that worked in the demo don't work in the manager's real context

Managers who try a prompt in a workshop and get a good result are motivated to use it again. Managers who try the same prompt in their actual work context and get a poor or inconsistent result lose confidence quickly and stop experimenting. This is especially common when generic prompts are deployed without customization for the specific role, team context, or output format the manager actually needs.

A manager who gets three inconsistent results from a prompt in week two will not use that prompt in week three. Prompt failure early in the program is one of the most reliable predictors of adoption drop-off.

4. Too many workflows deployed simultaneously

Programs that try to change five to ten manager behaviors in week one create cognitive overload. Managers can't form habits for ten new behaviors at once — they form habits for zero, because the cognitive load of tracking ten new things is higher than the perceived benefit of any individual one.

Two or three well-designed, high-value workflows deployed deeply are more effective than ten workflows deployed shallowly. Depth of adoption on two workflows produces real productivity gains. Shallow awareness of ten workflows produces no behavior change.

5. Early wins aren't captured and shared

Social proof within the cohort is one of the most powerful adoption drivers in weeks two and three. When a manager hears from a peer, not from a vendor, that an AI workflow saved them 45 minutes on Friday's report, they are more likely to use the workflow themselves. When weeks go by without anyone capturing and sharing these wins, the social proof dynamic never activates.

Most AI programs don't have a mechanism for capturing and amplifying early wins. The L&D team knows anecdotally that some managers are having success, but no one is collecting those wins and sharing them with the rest of the cohort in a structured way.

Five structural fixes

Fix 1: Embed workflows in existing tools, not new platforms

Deliver AI workflow prompts as pinned messages in the team's Slack channel, as saved prompts in Microsoft 365 Copilot, or as a shared Google Doc linked from the team's weekly planning meeting template. The goal is zero additional navigation — the prompt is accessible at the moment the task arises, in the environment where the manager is already working.

Fix 2: Build a weekly adoption cadence with visible metrics

Track the adoption rate weekly: the percentage of the cohort using each target workflow at least three times that week. Share this number with the cohort at the weekly check-in, framed as a team progress metric rather than as performance management: "We're at 58% adoption on the status report workflow this week. Here's what's helping the people who are using it consistently." Managers respond to team progress data differently than they respond to individual performance pressure.
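As a rough illustration, the weekly adoption rate can be computed from any usage log that records who used which workflow. The sketch below assumes a hypothetical log of (manager, workflow) pairs for a single week; the function name, the log shape, and the sample names are illustrative assumptions, not part of any particular tool.

```python
from collections import Counter


def weekly_adoption_rate(usage_log, cohort, workflow, min_uses=3):
    """Percentage of the cohort that used `workflow` at least `min_uses` times.

    `usage_log` is assumed to hold one (manager, workflow) pair per use
    within a single week. This shape is a hypothetical example.
    """
    if not cohort:
        return 0.0
    # Count uses of the target workflow per cohort member.
    uses = Counter(
        manager
        for manager, used in usage_log
        if used == workflow and manager in cohort
    )
    adopters = sum(1 for manager in cohort if uses[manager] >= min_uses)
    return 100.0 * adopters / len(cohort)


# Hypothetical four-person cohort and one week of logged uses.
cohort = {"ana", "ben", "chi", "dev"}
usage_log = [
    ("ana", "status_report"), ("ana", "status_report"), ("ana", "status_report"),
    ("ben", "status_report"), ("ben", "status_report"),
    ("chi", "status_report"), ("chi", "status_report"),
    ("chi", "status_report"), ("chi", "status_report"),
    ("dev", "meeting_summary"),
]

rate = weekly_adoption_rate(usage_log, cohort, "status_report")
print(f"{rate:.0f}% adoption on the status report workflow")  # 50%
```

Here two of the four managers cleared the three-use bar, so the rate is 50%. Counting uses per person rather than total uses keeps one heavy user from masking a cohort that has mostly stopped.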

Fix 3: Run a prompt quality review at the end of week 1

After the first week of real-world usage, collect output samples from five to ten managers and review them against the target output quality. Identify any prompts that are producing inconsistent or low-quality results and redesign them before week two. A prompt that works reliably in real context produces positive reinforcement. A prompt that works sometimes produces frustration and abandonment.
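One way to make this review repeatable is to score each collected sample and flag prompts mechanically. The sketch below is only an illustration: the 1 to 5 reviewer score, the 4.0 minimum average, and the one-point maximum spread are all assumed values that a program would need to set for itself, and the workflow names are hypothetical.

```python
from statistics import mean


def prompts_to_redesign(scores_by_prompt, min_mean=4.0, max_spread=1):
    """Return {prompt: [reasons]} for prompts that need redesign.

    Scores are assumed to be reviewer ratings from 1 (poor) to 5 (on target).
    The thresholds are illustrative assumptions, not established benchmarks.
    """
    flagged = {}
    for prompt, scores in scores_by_prompt.items():
        if not scores:
            continue  # nothing collected yet for this prompt
        reasons = []
        if mean(scores) < min_mean:
            reasons.append("low quality")
        if max(scores) - min(scores) > max_spread:
            reasons.append("inconsistent")
        if reasons:
            flagged[prompt] = reasons
    return flagged


# Hypothetical review scores from five managers per prompt.
samples = {
    "status_report": [5, 4, 5, 4, 5],    # reliable and on target
    "meeting_summary": [5, 3, 5, 5, 5],  # good average, but one poor result
    "feedback_draft": [3, 3, 3, 3, 3],   # consistent, but below target
}

for prompt, reasons in prompts_to_redesign(samples).items():
    print(prompt, "->", ", ".join(reasons))
```

Checking spread separately from the average matters because a prompt that works sometimes can still score well on average while producing the frustration described above.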

Fix 4: Two workflows first, others later

Launch with the two highest-value workflows for the cohort. Hold all other workflows until week-two adoption of the first two is above 70%. Adding more workflows before the first set is established dilutes attention and reduces the depth of habit formation on any individual workflow.
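The 70% gate can be written down as an explicit rule so that the decision to expand is not left to impression. This minimal sketch assumes the gate applies to each launch workflow individually (the text does not say whether the two are averaged), and the function and workflow names are hypothetical.

```python
def ready_to_add_workflows(week_two_adoption, threshold=70.0):
    """True only if every launch workflow is above `threshold` percent.

    `week_two_adoption` maps workflow name to week-two adoption rate.
    Requiring each workflow to clear the bar is an assumed interpretation.
    """
    if not week_two_adoption:
        return False  # no data means no evidence the habit is established
    return all(rate > threshold for rate in week_two_adoption.values())


# Hypothetical week-two numbers for the two launch workflows.
print(ready_to_add_workflows({"status_report": 74.0, "meeting_summary": 71.0}))  # True
print(ready_to_add_workflows({"status_report": 74.0, "meeting_summary": 62.0}))  # False
```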

Fix 5: Capture and share three wins per week

Designate someone (the L&D lead, the program manager, or a rotating cohort member) to collect three specific win examples per week. Format: "[Role] used [workflow] for [task]; the result was [specific time saving or quality improvement]." Share them at the weekly check-in. Three wins per week for four weeks builds a body of peer evidence that is more persuasive than any vendor data.

The week-8 test: how to know if adoption is sustained

The real test of an AI adoption program is not week four — it's week eight. Week four measures whether the behavior changed during the program. Week eight measures whether the behavior persisted after the program ended.

Run a lightweight adoption check at week eight: what percentage of the cohort is still using the target AI workflows at least three times per week? Benchmarks:

- Above 65%: The habit has formed. The workflow has become part of the manager's default approach to the task.

- 50–65%: Partial adoption. Some managers need a reinforcement touchpoint — typically a 15-minute re-engagement conversation identifying what's blocking continued use.

- Below 50%: The habit didn't form. This indicates a prompt quality problem, a friction problem (the workflow isn't accessible enough), or an accountability gap in the weeks after the program ended.
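The three benchmark bands above can be expressed as a small lookup. This is a sketch under one assumption: rates of exactly 50% and exactly 65% are placed in the partial band, which is how the "50–65%" range reads. The function name and return labels are illustrative.

```python
def classify_week_eight(adoption_pct):
    """Map a week-eight adoption rate (0 to 100) to one of the three bands."""
    if not 0 <= adoption_pct <= 100:
        raise ValueError("adoption_pct must be between 0 and 100")
    if adoption_pct > 65:
        return "habit formed"
    if adoption_pct >= 50:
        # Boundary values 50 and 65 land here by assumption.
        return "partial adoption"
    return "habit did not form"


for pct in (72, 65, 58, 41):
    print(f"{pct}%: {classify_week_eight(pct)}")
```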

A week-eight adoption rate above 65% is the signal that the productivity gains from the pilot are durable, not temporary. It's also the data point that justifies expanding the program to the next function — the CFO wants to see that the first cohort sustained the behavior before approving the next.
